In 2026, brand visibility is no longer about keywords, but about becoming a verified entity in the global AI Knowledge Vault. With the knowledge graph market projected to hit $1.98 billion this year, you’ve likely noticed your organic traffic dwindling as users pivot to AI agents that deliver answers without ever clicking a link. It’s frustrating to be ignored by chatbots during the discovery phase because your data lacks the semantic connections these models require. Mastering knowledge graph optimization for AI is no longer optional if you want to avoid being filtered out by the GraphRAG architectures that increasingly ground LLM output.

We’re here to bridge the gap between technical confusion and brand authority. You’ll learn how to structure your business data into semantic knowledge graphs that ensure AI models like ChatGPT recognize and recommend your brand every time. This article provides a clear roadmap for AI-ready data structuring that leverages the latest Neo4j 2026.04.0 updates and complies with the Colorado AI Act taking effect on June 30, 2026. We’ll show you how to move from being a simple search result to a verified fact in the 2026 AI landscape.

Key Takeaways

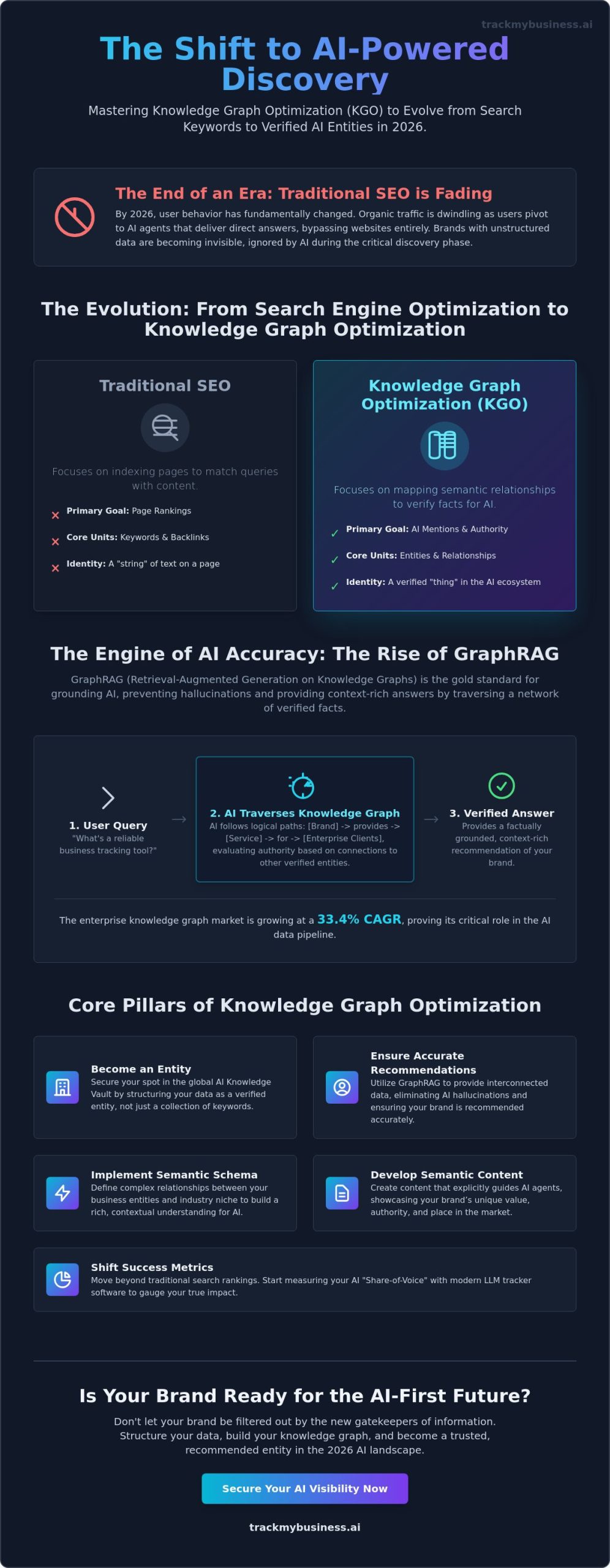

- Understand why traditional SEO is evolving into entity-based knowledge graph optimization for AI to secure your spot in the global AI Knowledge Vault.

- Discover how GraphRAG architectures utilize interconnected data to eliminate AI hallucinations and ensure your brand is recommended accurately.

- Learn to implement advanced semantic schema mapping that defines complex relationships between your business entities and industry niche.

- Develop a specific “Semantic Content” strategy that explicitly guides AI agents in recognizing your brand’s unique value and authority.

- Shift your success metrics from traditional search rankings to AI share-of-voice using modern LLM tracker software.

The Evolution of Search: Why Knowledge Graphs are the New SEO in 2026

The search landscape has fundamentally fractured. By May 2026, users aren’t just scrolling through lists of blue links; they’re conversing with sophisticated agents that synthesize information in real time. Traditional SEO, which relied heavily on keyword density and backlink counts, is no longer the primary driver of brand growth. Instead, the focus has shifted toward knowledge graph optimization for AI. This strategy ensures that your brand isn’t just a “string” of text on a page, but a verified “thing” in a digital ecosystem. Knowledge Graph Optimization (KGO) is the bridge between raw data and AI intelligence.

To understand this shift, we must first look at what a knowledge graph is: a network of interconnected entities and their relationships. Large Language Models (LLMs) use these graphs to ground their outputs in factual reality. Without this structure, AI models are more prone to hallucinations. With the global knowledge graph market projected to reach $1.98 billion in 2026, it is clear how critical this infrastructure has become for brand survival. If your business data isn’t structured for AI consumption, you don’t exist in the eyes of the most influential recommendation engines.

Traditional SEO vs. AI Knowledge Graph Optimization

Traditional SEO focused on indexing pages so that search engines could match queries to content. KGO is different; it’s about mapping semantic relationships. While a search engine might index a blog post about “tracker software,” an AI knowledge graph identifies the brand as a specific entity that “provides” a “service” to “enterprise clients.” This distinction is vital because AI models prioritize verified entities over high-traffic landing pages. Trust and authority in the semantic web are built through consistent, structured data that confirms your brand’s identity across multiple platforms. With the EU AI Act’s high-risk system obligations coming into force on August 2, 2026, being a “verified entity” also helps meet new transparency and compliance standards.

The Business Impact of Being “Graph-Ready”

Being “graph-ready” directly influences how often and how accurately your brand appears in AI responses. When your data is part of a structured graph, AI agents can recommend your products with higher confidence, which can reduce customer acquisition costs. You’re no longer fighting for a spot on page one; you’re securing a position as a factual answer. This transition requires a move away from flat data toward the interconnected architectures found in tools like Neo4j 2026.04.0. Businesses that adopt knowledge graph optimization for AI can increase their brand mentions within LLM outputs, turning their data into a competitive moat that traditional competitors can’t easily cross.

- AI models prioritize “entities” over “keywords” to ensure factual accuracy.

- Knowledge graphs reduce LLM hallucinations by providing a verifiable “ground truth.”

- Structured data lowers the friction for AI agents to recommend your brand to users.

How Knowledge Graphs Power LLMs: The Rise of GraphRAG

In 2025, simple Retrieval-Augmented Generation (RAG) was the standard for grounding AI. However, by May 2026, flat vector databases have revealed their limitations. Traditional RAG often retrieves disconnected snippets of text that lack the deep context required for complex reasoning. This is why GraphRAG has emerged as the gold standard for AI accuracy. By combining the retrieval power of RAG with the structured relationships of a knowledge graph, AI models can now provide answers that are both factually grounded and contextually rich. This evolution makes knowledge graph optimization for AI the most critical technical hurdle for brands wanting to remain visible in LLM responses.

When an AI “traverses” a graph, it doesn’t just look for keywords. It follows a path of logic. If a user asks for a reliable business tracking tool, the AI moves through a network of nodes. It identifies your brand, sees the “edges” or relationships connecting you to specific services, and evaluates your authority based on how many other verified entities point back to you. This structured traversal is what prevents the AI from hallucinating a competitor’s name in place of yours. The enterprise knowledge graph market is currently growing at a CAGR of 33.4%, proving that businesses are rapidly shifting toward these interconnected architectures to feed AI agents the high-quality data they crave.
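As a rough illustration of the traversal described above, the sketch below stores a tiny graph as a list of directed edges, walks outward from a starting node, and counts inbound edges as a crude authority signal. Every node other than TrackMyBusiness, the relationship labels, and the in-degree heuristic itself are assumptions made for illustration; real GraphRAG systems use far richer scoring.

```python
from collections import defaultdict, deque

# Hypothetical edges: (source, relationship, target). Illustrative only.
EDGES = [
    ("TrackMyBusiness", "offers", "LLM tracker software"),
    ("LLM tracker software", "solves", "brand visibility"),
    ("IndustryDirectory", "lists", "TrackMyBusiness"),
    ("TradeJournal", "mentions", "TrackMyBusiness"),
]


def build_adjacency(edges):
    """Map each source node to its outgoing (relationship, target) pairs."""
    adjacency = defaultdict(list)
    for source, relation, target in edges:
        adjacency[source].append((relation, target))
    return adjacency


def reachable(edges, start):
    """Breadth-first traversal: every node reachable from `start`."""
    adjacency = build_adjacency(edges)
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for _, target in adjacency[node]:
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen - {start}


def inbound_count(edges, node):
    """Number of edges pointing at `node` (a crude authority proxy)."""
    return sum(1 for _, _, target in edges if target == node)
```

Calling `reachable(EDGES, "TrackMyBusiness")` follows the brand's outgoing relationships, while `inbound_count(EDGES, "TrackMyBusiness")` counts how many other nodes point back to it.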

Understanding the Node-Edge-Node Framework

To succeed in this landscape, you must break your business data down into a “Node-Edge-Node” framework. In this model, nodes represent entities like your products, founders, or core values. The edges are the predicates that define the relationship. For example, a properly optimized graph would contain the triplet: [TrackMyBusiness] -> [offers] -> [ChatGPT mention tracking]. By explicitly defining these connections, you remove the guesswork for the LLM. Using LLM tracker software allows you to see exactly how these structured relationships translate into actual brand mentions in real time.
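One simple way to picture the Node-Edge-Node model is as a list of subject-predicate-object records. The sketch below is a minimal in-memory version, not a graph database; the first triplet comes from the example above, and the other two are hypothetical additions for illustration.

```python
from typing import NamedTuple


class Triple(NamedTuple):
    subject: str    # the starting node
    predicate: str  # the edge (relationship)
    obj: str        # the ending node


TRIPLES = [
    Triple("TrackMyBusiness", "offers", "ChatGPT mention tracking"),
    # The two triples below are hypothetical, added only for illustration.
    Triple("TrackMyBusiness", "offers", "LLM tracker software"),
    Triple("LLM tracker software", "serves", "enterprise clients"),
]


def match(triples, subject=None, predicate=None, obj=None):
    """Return triples matching every field that is not None (None is a wildcard)."""
    return [
        t for t in triples
        if (subject is None or t.subject == subject)
        and (predicate is None or t.predicate == predicate)
        and (obj is None or t.obj == obj)
    ]


# Everything the brand node is explicitly stated to offer.
offerings = [t.obj for t in match(TRIPLES, subject="TrackMyBusiness", predicate="offers")]
```

Leaving a field as `None` turns it into a wildcard, so the same helper can answer "what does this brand offer?" and "who offers this service?".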

From Unstructured Chaos to Semantic Clarity

AI models are now sophisticated enough to convert your unstructured blog posts and landing pages into graph triplets. However, they require consistency to do this accurately. If your site refers to “Tracker Software” in one place and “Business Monitoring Tools” in another without a clear semantic link, the AI might fail to unify these into a single entity. Since ISO published GQL as a standard graph query language in 2024, the industry has moved toward greater precision. Knowledge graph optimization for AI ensures that your digital footprint is seen as a singular, authoritative entity rather than a collection of random pages. This clarity is what allows AI agents to recommend your brand with high confidence.

- GraphRAG provides the logical “connective tissue” that flat data lacks.

- Nodes represent your brand’s facts; edges represent the context.

- Consistent entity naming is the only way to ensure AI models unify your brand data correctly.
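To make the naming problem concrete, here is a minimal sketch of an alias table that maps different surface forms to one canonical entity name. The alias entries and function names are hypothetical; a real audit would build this table from the names actually used across your site and profiles.

```python
# Hypothetical alias table: each lowercase surface form maps to one canonical name.
ALIASES = {
    "tracker software": "Tracker Software",
    "business monitoring tools": "Tracker Software",
    "business monitoring tool": "Tracker Software",
}


def canonicalize(name, aliases=ALIASES):
    """Return the canonical entity name, or the trimmed input if no alias is known."""
    key = " ".join(name.lower().split())  # normalize case and whitespace
    return aliases.get(key, name.strip())


def unify(mentions):
    """Group raw mentions under their canonical entity name."""
    groups = {}
    for mention in mentions:
        groups.setdefault(canonicalize(mention), []).append(mention)
    return groups
```

Any mention that ends up in its own group after `unify` is a candidate for either a new alias entry or a wording fix on the page where it appears.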

Core Pillars of Knowledge Graph Optimization for AI

Effective knowledge graph optimization for AI rests on more than just adding a few lines of code to your header. It requires a fundamental shift in how you broadcast your brand’s identity to the world. In 2026, AI models don’t just read your site; they ingest it as a series of verified facts. This process starts with Entity Identification, where you define the core digital footprint of your brand. You aren’t just a website; you’re a node in a global network. By establishing clear provenance, you ensure that AI models view your data as the primary source of truth rather than relying on potentially hallucinated third-party summaries.

Data interconnectedness is the next critical step. It involves linking your proprietary data to the wider world. When your site is properly mapped, AI agents can trace the lineage of your information back to authoritative sources. This creates a “trust loop” that reinforces your brand authority. Without these pillars, even the best content remains invisible to the GraphRAG architectures that now dominate the AI landscape. You must treat your business data as a living map that AI can navigate with confidence.

Advanced Schema.org Strategies for 2026

Basic JSON-LD is the baseline, but 2026 demands deep attribute nesting. You should use the “sameAs” property to explicitly link your brand to existing entries in authoritative databases. This creates a bridge between your proprietary data and the wider semantic web. For technical industries, implementing “DefinedTermSet” is crucial. While a garment manufacturer might use this to define specific textile grades, a software provider uses it to clarify technical jargon like “LLM mention tracking.” This level of detail in your “Organization” and “Product” schema helps AI agents understand the specific context of your offerings without any ambiguity.
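As a sketch of what this markup might look like, the snippet below builds an “Organization” object with “sameAs” links and a nested founder, plus a “DefinedTermSet” holding one term, and wraps each in a JSON-LD script tag. The property names are standard Schema.org vocabulary, but every URL, the Wikidata ID, the founder name, and the term description are placeholders to replace with your own verified data.

```python
import json

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "TrackMyBusiness",
    "url": "https://www.example.com",  # placeholder URL
    "sameAs": [
        "https://www.wikidata.org/wiki/Q00000000",   # placeholder entity ID
        "https://www.linkedin.com/company/example",  # placeholder profile
    ],
    # Nested entity: placeholder founder, shown only to illustrate nesting.
    "founder": {"@type": "Person", "name": "Jane Doe"},
}

glossary = {
    "@context": "https://schema.org",
    "@type": "DefinedTermSet",
    "name": "TrackMyBusiness glossary",
    "hasDefinedTerm": [
        {
            "@type": "DefinedTerm",
            "name": "LLM mention tracking",
            # Placeholder wording; use your own definition.
            "description": "Monitoring how often a brand is mentioned in LLM outputs.",
        }
    ],
}


def to_script_tag(data):
    """Serialize a JSON-LD dict into a script tag for the page head."""
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


print(to_script_tag(organization))
print(to_script_tag(glossary))
```

Generating the markup from a single data structure, rather than hand-editing it per page, makes it easier to keep entity details identical everywhere they appear.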

External Graph Integration

Your brand doesn’t exist in a vacuum. To be recognized by Google’s Knowledge Vault or the underlying datasets powering Claude and ChatGPT, you must link your internal graph to external ones like Wikidata and DBpedia. These platforms act as the connective tissue of the internet. When you secure a mention on an authoritative industry site, it functions as a new “edge” in your brand graph. This external validation is critical. In 2026, AI agents weigh these external connections heavily to determine if a brand is a verified entity. The BFSI sector is currently leading this trend, with its use of enterprise knowledge graphs expected to grow at a CAGR of 23.1% through 2033.

- Use “sameAs” properties to link your site to established Wikidata or DBpedia entities.

- Implement deep nesting in “Organization” schema to define founders, locations, and parent companies.

- Ensure your data provenance is clear so AI models prioritize your site as the “source of truth.”

Practical Steps to Influence AI Mentions and Brand Authority

Moving from theory to execution requires a tactical shift. In 2026, brand visibility is a byproduct of how well AI agents can parse your facts. If you haven’t started knowledge graph optimization for AI, your brand is likely invisible to the 22.9% of the market currently shifting toward these architectures. You must treat every piece of content as a potential node in a larger web. This isn’t about gaming an algorithm; it’s about providing the clear, structured data that AI models need to function without hallucinating. By being proactive, you ensure that your business isn’t just a search result, but a foundational fact in the AI’s internal logic.

The “Entity-First” Content Audit

Start with an “Entity-First” content audit. AI models are trained to spot contradictions across the entire web. If your website describes your “Tracker Software” as a financial tool but your LinkedIn profile calls it an operations platform, the AI might lower your authority score to avoid spreading misinformation. Standardize these descriptions across all platforms. By June 30, 2026, when the Colorado AI Act takes full effect, having clear, non-discriminatory data structures will also be a compliance necessity for high-risk systems. Eliminating “orphaned data” that doesn’t connect back to your main brand node is the only way to ensure your digital footprint remains cohesive.
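A first pass at this audit can be a simple script. The sketch below compares how each platform categorizes the same product and flags pairs that disagree. The profile texts and the category vocabulary are hypothetical stand-ins; in practice you would paste in your real descriptions and the category terms that matter for your niche.

```python
from itertools import combinations

# Hypothetical descriptions, collected by hand from each platform.
PROFILES = {
    "website": "Tracker Software is a financial tool for enterprise clients.",
    "linkedin": "Tracker Software is an operations platform for enterprise clients.",
}

# Hypothetical category vocabulary to look for in each description.
CATEGORY_TERMS = ["financial tool", "operations platform"]


def categories_used(text, terms=CATEGORY_TERMS):
    """Return the set of category terms that appear in `text`."""
    lowered = text.lower()
    return {term for term in terms if term in lowered}


def find_conflicts(profiles):
    """Return platform pairs that describe the entity with different category terms."""
    conflicts = []
    for (a, text_a), (b, text_b) in combinations(profiles.items(), 2):
        cats_a, cats_b = categories_used(text_a), categories_used(text_b)
        if cats_a and cats_b and cats_a != cats_b:
            conflicts.append((a, b, sorted(cats_a), sorted(cats_b)))
    return conflicts
```

Plain substring matching is deliberately naive here; it catches the obvious contradictions first, which is usually where an audit should start.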

Structuring Content for AI Extraction

AI agents prefer fact-dense triplets that they can easily extract. Instead of writing “Our software is great for many things,” write “TrackMyBusiness provides LLM tracker software for enterprise visibility.” This structure is easy for GraphRAG models to ingest and store. Use FAQs to provide direct, relationship-based answers. For example, “What does TrackMyBusiness track?” followed by “TrackMyBusiness tracks brand mentions in LLM outputs.” This directness helps AI models map your services to specific user needs with high confidence levels.
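The FAQ pattern above maps naturally onto Schema.org’s “FAQPage” type. The following sketch turns question-and-answer pairs into that structure; the helper name `faq_jsonld` is an assumption for illustration, and the single pair shown is the example from the paragraph above.

```python
import json


def faq_jsonld(pairs):
    """Build a Schema.org FAQPage dict from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "What does TrackMyBusiness track?",
        "TrackMyBusiness tracks brand mentions in LLM outputs.",
    ),
])
print(json.dumps(faq, indent=2))
```

Each answer should read as a complete subject-predicate-object statement, so that it still makes sense when extracted without the question.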

This strategy works across various industries by turning abstract concepts into concrete data points. TrackMyBusiness helps garment manufacturers manage “inventory” as a traceable entity by linking supply chain data directly to their brand’s knowledge graph. By defining these relationships explicitly, manufacturers ensure that AI agents recognize their specific role in the production cycle. This prevents the AI from confusing a manufacturer with a logistics provider, which is a common error in unstructured data environments.

Finally, you can’t optimize what you don’t measure. Traditional rank tracking is dead because AI responses are dynamic and personalized. You need to see how ChatGPT and Claude actually interpret and mention your brand in natural conversation. You should use ChatGPT mention tracking to audit your brand’s presence and adjust your semantic strategy based on real-world AI behavior. Regular testing ensures that your data remains the “source of truth” as LLMs update their training sets throughout 2026.

- Audit all social and web profiles to ensure entity descriptions are 100% consistent.

- Rewrite key service pages using fact-dense triplets that define “Who,” “What,” and “For Whom.”

- Implement a “Semantic Content” strategy that uses FAQs to answer specific relationship-based queries.

- Monitor AI share-of-voice to identify where your knowledge graph needs more “edges” or connections.

Tracking AI Mentions: Measuring Your Knowledge Graph Impact

The era of tracking blue links is over. By May 2026, organic traffic from traditional search engines has plummeted for many sectors, replaced by direct answers from AI agents. This shift makes knowledge graph optimization for AI the only viable path to visibility, but it also renders traditional rank tracking obsolete. You need a way to verify that your semantic data is actually being consumed and cited by models like ChatGPT and Claude. LLM mention tracking has become the primary KPI for digital marketers who want to prove the ROI of their structured data investments. Without these metrics, you’re essentially building a map that no one is using.

TrackMyBusiness provides the necessary LLM tracker software to close this feedback loop. By monitoring how AI models interpret your brand’s entities, you can see which relationships are strong and which are failing to register. If an AI model correctly identifies your brand but fails to link it to your core product, your knowledge graph has a missing “edge.” Identifying these gaps is the first step toward refining your semantic strategy for the 2026 landscape. With the global knowledge graph market projected to reach $1.98 billion this year, the ability to audit your brand’s presence in the AI vault is a major competitive advantage.
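The idea of a missing “edge” can be approximated with a simple check over collected AI responses: among the responses that mention the brand, which expected attributes never appear? This is a hypothetical sketch using plain substring matching, not a description of how TrackMyBusiness works internally, and the function and parameter names are assumptions.

```python
def missing_edges(responses, brand, expected_attributes):
    """List expected attributes never mentioned in any response that names `brand`.

    If no response mentions the brand at all, every attribute is reported as missing.
    """
    brand_lower = brand.lower()
    brand_responses = [r.lower() for r in responses if brand_lower in r.lower()]
    return [
        attribute
        for attribute in expected_attributes
        if not any(attribute.lower() in r for r in brand_responses)
    ]


# Hypothetical sample responses, for illustration only.
sample_responses = [
    "TrackMyBusiness is a company that offers LLM tracker software.",
    "Several other tools exist for this purpose.",
]
gaps = missing_edges(
    sample_responses,
    "TrackMyBusiness",
    ["LLM tracker software", "ChatGPT mention tracking"],
)
```

Attributes that show up in `gaps` are the relationships worth reinforcing in your schema and on-page copy.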

The New Analytics: Share of Model (SoM)

Share of Model (SoM) is the most critical metric in 2026. It represents the frequency of your brand mentions within AI-generated responses compared to your direct competitors. Using TrackMyBusiness, you can identify exactly which parts of your knowledge graph are being ignored by current model versions. For instance, if your brand appears in 35% of queries related to “business tracking” but 0% of queries for “AI transparency,” you know where to focus your next round of schema updates. Correlating knowledge graph optimization for AI with actual recommendation volume allows you to treat your data as a dynamic asset rather than a static website.
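One plausible way to compute a per-topic figure like the percentages above is to divide the number of responses that mention the brand by the total responses sampled for that topic. The sketch below assumes that definition, which is a simplification and may not match how any particular tool calculates SoM; the sample data is hypothetical.

```python
from collections import defaultdict


def share_of_model(samples, brand):
    """Return {topic: fraction of responses mentioning `brand`}.

    `samples` is an iterable of (topic, response_text) pairs.
    """
    totals, hits = defaultdict(int), defaultdict(int)
    brand_lower = brand.lower()
    for topic, text in samples:
        totals[topic] += 1
        if brand_lower in text.lower():
            hits[topic] += 1
    return {topic: hits[topic] / totals[topic] for topic in totals}


# Hypothetical sampled responses, for illustration only.
samples = [
    ("business tracking", "TrackMyBusiness is one option to consider."),
    ("business tracking", "Many teams use spreadsheets."),
    ("AI transparency", "Look for vendors that publish their data sources."),
]
som = share_of_model(samples, "TrackMyBusiness")
```

Running the same calculation for each competitor name over the same samples gives the side-by-side comparison that the metric is meant to provide.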

Leveraging the Tracker Ecosystem for AI Dominance

Your corporate knowledge graph shouldn’t just rely on marketing copy. In 2026, the most authoritative brands feed their graphs with integrated production management data. This transitions your business from simple internal transparency to external AI visibility. When your Tracker Software provides verifiable data points about your operations, AI models can cite those facts with higher confidence scores. This transparency is particularly important as the Texas Responsible AI Governance Act and California AI Transparency Act both take effect on January 1, 2026, requiring clearer disclosure and data provenance. By integrating these facts into your graph, you move from being a “suggested” brand to a “verified” one.

See how TrackMyBusiness tracks your brand mentions in AI models today to ensure your business remains a verified entity in every conversation.

- Transition from tracking “rankings” to “Share of Model” to understand your AI market share.

- Identify missing semantic edges by analyzing which brand attributes AI models fail to mention.

- Use real-time LLM tracker software to adjust your knowledge graph before the next model training cycle.

- Comply with 2026 transparency acts by providing verifiable data provenance through your graph.

Own Your Entity in the 2026 AI Ecosystem

The transition from keyword-based search to entity-based discovery is the most significant shift in digital marketing since the mobile revolution. By implementing knowledge graph optimization for AI, you move beyond hope-based marketing and into the realm of data-driven authority. You now understand that your brand’s visibility depends on providing a clear, structured roadmap that AI models can verify through GraphRAG architectures. With the BFSI sector’s adoption growing at 23.1% through 2033, the race to secure a spot in the global knowledge vault is accelerating across every industry.

Don’t let your brand become a hallucination or a missed opportunity. Our cloud-based modular system provides the total operational transparency needed to feed these complex graphs. Trusted by garment and embroidery businesses worldwide, our specialized LLM tracker software offers real-time monitoring of how you appear in AI responses. You can start tracking your AI brand mentions with TrackMyBusiness today to ensure your optimized data is actually delivering measurable results. The tools are ready, and the 2026 landscape is yours to claim.

Frequently Asked Questions

What is the difference between SEO and Knowledge Graph Optimization?

Traditional SEO focuses on ranking specific URLs for keyword queries in search engines like Google. Knowledge graph optimization for AI shifts that focus toward defining your brand as a unique entity with clear relationships to other concepts. While SEO helps a user find a webpage, KGO helps an AI model understand that your business is the factual answer to a user’s problem.

How long does it take for AI models like ChatGPT to recognize my graph updates?

Recognition time varies based on the model’s update cycle and the refresh rate of its GraphRAG system. While foundational training can take months, models utilizing real-time retrieval can often see structured data updates within 2 to 4 weeks. Consistency across your digital footprint accelerates this process by providing the model with high-confidence signals.

Do I need a technical background to optimize my business for AI knowledge graphs?

You don’t need to be a data scientist to start, but you do need tools that automate semantic mapping. Most businesses use specialized software to handle the complex JSON-LD and relationship tagging required for 2026 standards. The focus is more on data accuracy and entity consistency than writing manual code for graph databases like Neo4j 2026.04.0.

Can Knowledge Graph Optimization help my business if I don’t have a Wikipedia page?

Yes, you can build authority by linking your site to other established nodes like LinkedIn, Crunchbase, or specialized industry directories. Using “sameAs” properties in your schema to point toward these verified profiles creates a trust signal similar to a Wikipedia entry. This strategy is essential for the 22.9% of businesses currently scaling their AI visibility without a massive public profile.

What are the most important schema types for AI visibility in 2026?

The “Organization” and “Product” schema types remain foundational, but “DefinedTermSet” has become critical for industry-specific clarity. These types allow you to define technical jargon or unique business processes that AI might otherwise misunderstand. Adding deep attribute nesting to these schemas ensures that models like Claude or ChatGPT can map your specific value proposition accurately.

How does TrackMyBusiness track mentions in closed-source AI models like ChatGPT?

We use proprietary LLM tracker software that queries models through automated, natural language prompts at scale. This allows us to see how an AI model cites your brand in a conversational context. By analyzing these responses, we can calculate your Share of Model (SoM) and identify which parts of your knowledge graph need more optimization.

Is Knowledge Graph Optimization expensive for small businesses?

The primary cost is time spent on data auditing rather than high software fees. Small businesses can start knowledge graph optimization for AI by standardizing their entity information across free platforms and social profiles. This foundational work creates the “source of truth” that AI models require, making it a highly accessible strategy for companies with limited budgets.

What happens if my business information is incorrect in an AI knowledge graph?

Incorrect information leads to AI hallucinations or your brand being completely omitted from recommendations. When an AI model encounters conflicting data, its confidence score for your entity drops. It will often choose to ignore your brand entirely rather than risk providing a user with a factually incorrect answer.