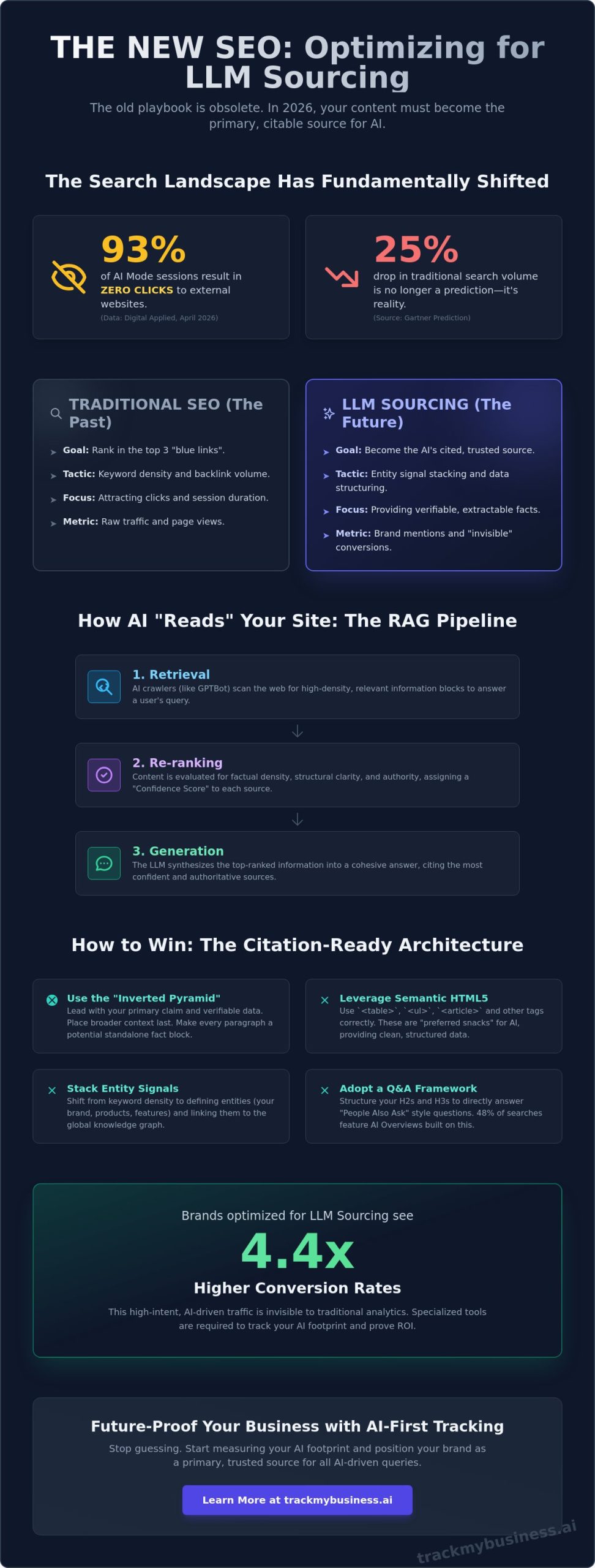

93% of AI Mode sessions now end without a single click to an external website, according to April 2026 data from Digital Applied. If you’ve watched your traditional search traffic stall while Gemini 3.1 and GPT-5.5 dominate the screen, you’re not alone. It’s unsettling to realize that the old SEO playbook doesn’t apply when an LLM synthesizes your expertise without giving you the credit you deserve. You’re likely worried about brand hallucinations or simply being left out of the conversation entirely as Gartner’s predicted 25% drop in traditional search volume becomes a reality.

This guide will teach you exactly how to optimize content for llm sourcing to ensure your brand is cited as a primary authority. You’ll move beyond raw keyword volume and master the “Citation-Ready” architecture that AI agents demand. We’ll explore the semantic techniques required to anchor your brand in the AI Knowledge Graph, helping you achieve the 4.4x higher conversion rates seen in AI-driven traffic while using 2026 tracking tools to measure every mention.

Key Takeaways

- Learn to structure your pages using semantic HTML5 and the “Inverted Pyramid” method to make your claims immediately extractable for AI agents.

- Discover how to optimize content for llm sourcing by shifting from keyword density to entity signal stacking within the global knowledge graph.

- Understand the critical difference between static training data and Retrieval-Augmented Generation (RAG) to ensure models like GPT-5.5 use your latest updates.

- Identify the specialized tools needed to track your “invisible” AI footprint and brand mentions that traditional analytics platforms miss.

- Leverage structured data from business tracking suites to position your brand as a primary, trusted source for complex AI-driven queries.

Understanding the Shift: How LLMs Source and Synthesize Content

Traditional SEO was built on a simple premise: rank in the top three blue links and collect the clicks. By May 2026, that model has largely collapsed for informational queries. Large Language Models (LLMs) like GPT-5.5 and Gemini 3.1 now act as gatekeepers, synthesizing vast amounts of web data into a single, cohesive answer. This shift has birthed “LLM Sourcing,” the specific process by which an AI model selects, verifies, and cites external web data to construct its response. Unlike traditional search engines that point users to a destination, LLMs ingest your data to provide an immediate solution.

The core of this evolution lies in the difference between static training data and Retrieval-Augmented Generation (RAG). While GPT-5.5 was released on April 24, 2026, its internal training data has a fixed cutoff. To provide real-time accuracy, it uses RAG to pull live information from the web. This is where you win or lose. If your content isn’t structured for these dynamic retrievers, you won’t be cited. Understanding how to optimize content for llm sourcing requires moving away from keyword density and toward “Confidence Scores.” LLMs assign these scores based on how easily their re-rankers can verify your claims against other high-authority nodes in the knowledge graph.

The RAG Pipeline: How AI “Reads” Your Site in 2026

Modern AI agents don’t browse your site like a human; they process it through a three-stage pipeline: Retrieval, Re-ranking, and Generation. First, crawlers like GPTBot or Google-Extended identify high-density information blocks. Next, a re-ranker evaluates these blocks for factual density and structural clarity. As of April 2026, AI Overviews appear in 48% of searches, and these systems prioritize content that uses clear semantic hierarchy. If your data is buried in “unstructured” fluff or conversational filler, the re-ranker will discard it in favor of a competitor who leads with a verifiable claim. You must treat every paragraph as a potential standalone data point for an AI agent.

Indexing vs. Sourcing: A Critical Distinction

Traditional indexing is concerned with where a page lives, but sourcing is obsessed with what a page proves. In the current landscape, being “indexed” by Google doesn’t guarantee visibility in a Perplexity or ChatGPT response. The focus has shifted from keyword matching to “Intent-Entity” alignment. This means the model looks for specific entities, like your brand name or product features, and checks if they align with the user’s intent. To succeed, you must ensure your content defines these entities clearly and links them to recognized industry standards. LLM Sourcing is the tactical alignment of content structure with AI retrieval logic. By mastering how to optimize content for llm sourcing, you ensure your brand becomes the “verified fact” that the AI chooses to repeat.

Building a “Synthesis-Ready” Content Architecture

To win the citation game, you have to stop writing for human eyes alone and start writing for machine extractors. LLMs don’t read your content from top to bottom; they scan for high-density information blocks that satisfy a specific query. The most effective way to ensure visibility is through the “Inverted Pyramid” of AI content. You must lead with your primary claim, support it with verifiable data, and only then provide broader context. This structure allows RAG re-rankers to identify your page as a high-confidence source within milliseconds of a crawl.

Semantic HTML5 is your primary tool for signaling this hierarchy to non-human readers. Using tags like <article>, <section>, and <aside> helps LLMs distinguish between your core message and secondary noise. Tables and bulleted lists are particularly valuable here. These formats act as “preferred snacks” for models like Gemini 3.1 because they provide clean, structured data that is easy to summarize. If you want to know how to optimize content for llm sourcing, start by turning your key takeaways into standalone “Fact Blocks.” These are modular paragraphs that provide a complete answer even when stripped of the surrounding text.

Effective architecture also means organizing your business data so it’s ready for retrieval. Using tracker software to maintain a structured repository of your brand’s key metrics ensures that when an AI agent looks for your performance data, it finds a consistent and authoritative source.

The Q&A Framework for Generative Search

As of April 2026, AI Overviews appear in 48% of search results. To capture these spots, your H2 headings should mirror “People Also Ask” questions. For those in specific niches like apparel manufacturing, a heading like “What is the best software for apparel manufacturing?” should be followed by a 50 to 60 word “Snippet-Bait” summary. This summary must be direct, factual, and devoid of marketing fluff. By providing a concise answer immediately under the heading, you make it nearly impossible for an LLM to ignore your content during the synthesis phase.

Data Density: The Secret to High Confidence Scores

LLMs prioritize content with high data density. Instead of a traditional blog post filled with anecdotes, build a “Data Hub” that integrates unique statistics and proprietary findings. Using Schema.org markup to define your brand as a specific “Entity” rather than just a collection of keywords is essential. This entity-first approach helps models like GPT-5.5 connect your brand to high-authority industry terms, raising your confidence score. Mastering how to optimize content for llm sourcing requires this shift from storytelling to data-driven authority, ensuring your brand is the one the AI trusts to cite.

Entity Signal Stacking: Moving Beyond Keywords

By May 2026, the era of keyword stuffing is officially over. AI models like GPT-5.5 and Claude Opus 4.7 don’t look for strings of text; they look for entities. An entity is a distinct, well-defined object or concept, such as your brand, your CEO, or a specific product. In the eyes of an LLM, these are nodes in a massive knowledge graph. To stay visible, you must move beyond simple search terms and focus on “Entity Signal Stacking.” This involves creating a dense web of associations that prove your brand is the definitive authority on a specific subject.

One of the most powerful techniques is “Co-occurrence.” This means consistently placing your brand name in close proximity to high-authority industry terms. If your brand appears alongside phrases like “Apparel ERP” or “Tracker Software” across multiple reputable sites, LLMs begin to hard-code that relationship into their internal weights. This isn’t just about your own blog. It’s about your entire digital footprint. When you master how to optimize content for llm sourcing, you ensure that every mention of your brand reinforces its position as a trusted node in the AI’s map of the industry.

Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) remain the backbone of AI credibility. Models are now trained to prioritize content from verifiable experts with clear credentials. As of April 2026, AI Overviews appear in 48% of searches, and they heavily favor sources that have a clear, multi-platform presence. Validating your brand entity requires a digital footprint that spans third-party review sites, industry journals, and social platforms, all echoing the same core facts about your business.

The Knowledge Graph Strategy

Mapping your brand’s relationship to industry problems is essential for AI visibility. You should treat your website as a “Semantic Web” where internal links don’t just point to pages, but define relationships between concepts. For example, linking a page about “garment production bottlenecks” directly to a solution page for “real-time tracking” tells the AI that your brand is the bridge between that problem and that solution. LLMs verify facts by cross-referencing multiple “Entity Signals” across the web. If your internal data matches the external consensus, your confidence score climbs.

Citation-Optimized Branding

Consistency is the key to citation. If you want to know how to optimize content for llm sourcing, you must ensure your brand name is associated with a specific niche. Being the number one source for a specialized field like “garment production management” is far more valuable than being a generic player in the “ERP software” space. This niche authority makes it easier for LLMs to categorize you. When a user asks for a recommendation in your specific field, the AI is more likely to cite you because your entity signals are concentrated and unmistakable.

The Verification Loop: Measuring Your AI Footprint

Traditional analytics platforms like GA4 are increasingly blind to how users interact with your brand. As of April 2026, Digital Applied reports that 93% of AI Mode sessions end without a single click to an external website. If you’re only looking at referral traffic, you’re missing the vast majority of your brand’s reach. This “invisible” problem requires a shift from tracking clicks to tracking mentions and citations. You need to know when ChatGPT recommends your product in a private conversation, even if that user never visits your site. Measuring this footprint is the only way to validate your efforts in how to optimize content for llm sourcing and ensure your brand authority is actually growing.

Sentiment analysis has become a critical KPI for brand managers. AI models don’t just cite you; they characterize you. By auditing LLM responses, you can see if models like GPT-5.5 perceive your brand as a “premium leader” or a “budget alternative.” These perceptions are often rooted in the entity signals discussed in previous sections. Additionally, you should treat AI “hallucinations” as a strategic guide. If an LLM incorrectly claims your software has a specific feature, it’s often because it found a semantic demand in the market that your current content hasn’t addressed. These errors highlight exactly where your “Citation-Ready” architecture is thin.

How to Audit Your AI Visibility

The first step in a modern AI audit is identifying “Core Intent” queries. These aren’t just keywords; they are the complex, multi-step questions your customers ask AI assistants. Once you have these queries, you can monitor your AI citations to see how often your brand appears as a primary source. You must also analyze “Source Attribution” to see which specific pages the AI is pulling from. Often, a single well-structured “Fact Block” on your site is responsible for 80% of your AI visibility, making it a template for future content.

Closing the Gap: From Mention to Conversion

Conversion in an AI-first world happens when the “Source Links” provided by AI Overviews or Perplexity lead to a consistent experience. If an AI agent promises a specific solution based on your data, your landing page must fulfill that promise immediately. With AI Overviews appearing in 48% of searches as of April 2026, the bridge between the AI’s summary and your site’s content must be seamless. Mastering how to optimize content for llm sourcing means ensuring that when a user finally does click through, they find exactly the data density and expertise the AI led them to expect.

Future-Proofing with TrackMyBusiness: The AI-First Business Suite

The transition from “ranking” to “sourcing” is complete. By May 2026, businesses that haven’t centralized their data for AI consumption are finding themselves invisible in 93% of AI Mode sessions. TrackMyBusiness serves as the essential bridge between your daily operations and the LLM knowledge graph. It isn’t just a management tool; it’s a structural foundation. By organizing your core business metrics into a synthesis-ready format, you’re doing more than just running a company. You’re building a live repository that AI agents can verify and cite with high confidence scores.

Operational transparency is now a direct driver of AI discoverability. When your inventory, order logs, and customer interactions are managed through a “Tracker” software system, you create a trail of structured data. This makes the question of how to optimize content for llm sourcing a matter of operational hygiene rather than just marketing. LLMs like Gemini 3.1 Pro prioritize sources that offer verifiable, real-time data points over static, fluff-heavy blog posts. TrackMyBusiness ensures that your brand’s “Fact Blocks” are always current and ready for retrieval-augmented generation.

Modular Data for Modular Models

Modern models like GPT-5.5 thrive on modularity. They don’t want to dig through a 2,000-word essay to find your production capacity; they want a clean data node. TrackMyBusiness organizes your business into distinct modules, such as Inventory, Orders, and Customers, which aligns perfectly with how AI re-rankers process information. One sustainable apparel brand recently used this modular approach to dominate the “eco-friendly manufacturing” niche. By exposing their supply chain data through Tracker’s structured output, they became the most cited authority in ChatGPT’s manufacturing recommendations by March 2026.

The TrackMyBusiness Advantage

Tracking ChatGPT mentions is the SEO of 2026. Traditional keyword tracking won’t tell you if Claude Opus 4.7 is recommending your product or hallucinating a competitor’s features. The TrackMyBusiness LLM tracker provides the visibility you need to close the loop between managing your production and monitoring your digital footprint. You can see exactly how models perceive your brand and adjust your content architecture in real-time. It’s time to stop guessing and start measuring your impact in the generative ecosystem. Start tracking your brand mentions in ChatGPT today to ensure your business remains the primary source in every AI conversation. Learning how to optimize content for llm sourcing is a continuous journey, but with the right suite, your brand stays at the center of the graph.

Secure Your Brand’s Place in the AI Knowledge Graph

The transition to a generative-first web is no longer a distant threat; it’s the operational reality for every business. By mastering how to optimize content for llm sourcing, you’ve moved from chasing disappearing clicks to anchoring your brand as a primary source for models like GPT-5.5 and Gemini 3.1. You now understand that structural data density and clear entity signal stacking are the only ways to survive the 93% no-click environment reported by Digital Applied in April 2026. Your content must be modular, verifiable, and ready for real-time retrieval.

Success in this landscape requires tools built for this specific era. TrackMyBusiness provides a specialized ERP for the garment and decoration industry, offering a cloud-based modular system that ensures 100% data transparency for AI agents. As the first-to-market ChatGPT mention tracking software, it allows you to see exactly where your brand stands in the synthesis loop. Don’t let your expertise go uncredited while AI agents summarize your hard work. Master your AI visibility with TrackMyBusiness mention tracking and take control of your digital footprint. The future of brand authority belongs to those who build for the machines that guide the users.

Common Questions About AI Visibility

What is the difference between SEO and LLM optimization?

SEO focuses on ranking in a list of links, while LLM optimization targets becoming the single synthesized response. Traditional SEO relies on link equity and keyword density. In contrast, knowing how to optimize content for llm sourcing means prioritizing factual density and entity-intent alignment. The goal is to make the model trust your data enough to cite it as a primary fact rather than just listing your URL.

How long does it take for content changes to reflect in ChatGPT responses?

Retrieval-Augmented Generation (RAG) allows changes to reflect in as little as 15 to 30 minutes once a crawler like GPTBot indexes the update. However, changes to the model’s internal “knowledge” or static weights only happen during major training cycles. For real-time visibility in 2026, you must focus on the dynamic retrieval pipeline used by agentic models like GPT-5.5.

Does Schema markup still matter for AI search in 2026?

Schema markup is more important than ever because it provides the structured metadata AI agents need to verify your brand as a specific entity. By using Schema.org vocabulary, you help re-rankers confirm your location, products, and leadership credentials. This structured layer acts as a verification certificate that boosts your confidence score during the retrieval phase of the AI response.

Can I pay LLMs like ChatGPT to mention my brand?

There is currently no “sponsored citation” model for LLMs similar to traditional search ads. Citations are earned through algorithmic trust and content structure. While some platforms might experiment with ads in the future, the primary way to gain visibility is by mastering how to optimize content for llm sourcing through organic, high-density data that provides genuine value to the model’s users.

What are the best tools for tracking AI brand mentions?

The top tools in May 2026 include TrackMyBusiness for specialized mention tracking and the Semrush One plan, which launched in early 2026 with AI visibility features. Profound AI also offers enterprise-level monitoring starting at $399 per month. These tools are necessary because traditional analytics like GA4 cannot see inside the “black box” of private, generated AI responses.

How do I stop AI from hallucinating incorrect information about my company?

Stop hallucinations by creating clear “Fact Blocks” that directly address and correct common errors. Ensure your brand information is identical across your site, LinkedIn, and major industry directories. LLMs use a consensus-based verification system. If 85% of your digital footprint across different domains says the same thing, the model is much less likely to invent incorrect details.

Is long-form content better than short-form for AI sourcing?

Data density is far more important than word count in the age of AEO. A 300-word page packed with unique statistics and clear claims often outperforms a 2,000-word guide filled with conversational filler. AI re-rankers prefer modular content that they can easily extract and summarize without having to filter out irrelevant prose or decorative language.

How does Perplexity AI differ from ChatGPT in how it sources content?

Perplexity AI acts as a real-time retrieval engine that cites every source for every single query. ChatGPT uses a hybrid approach, relying on its massive internal training data for general knowledge while using RAG for specific or current events. As of January 2026, 11% of organizations use Perplexity, making its real-time, source-heavy retrieval a critical target for brand visibility.