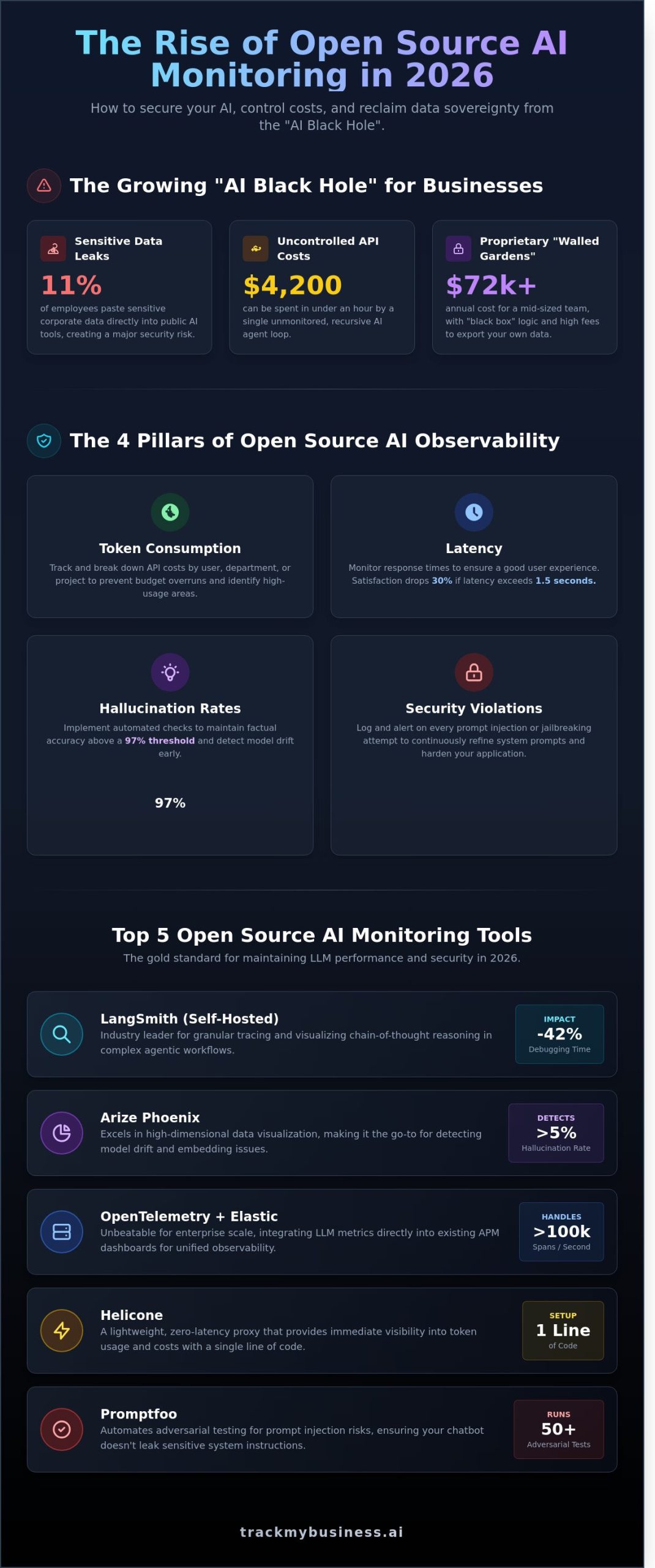

In 2025, a security audit by Cyberhaven revealed that 11% of employees pasted sensitive corporate data into AI tools, a figure that has surged as we enter 2026. You likely already feel the pressure of this “black hole” in your operations. It is stressful to watch your monthly API bill climb while wondering if your proprietary source code just leaked into a public training set. You know that visibility is the only way to stop Shadow AI, but the price tag for enterprise security suites is often astronomical.

This is exactly why open source ChatGPT monitoring has become the go-to solution for tech-forward businesses. You can now deploy self-hosted frameworks that offer a centralized dashboard for all LLM activity, allowing you to track costs per user and trigger instant alerts when sensitive data is entered. This guide breaks down the top five open-source tools that provide enterprise-grade oversight without the $50,000 annual contract, giving your IT team the clarity they need to scale AI safely.

Key Takeaways

- Understand why simple logging is no longer enough and how to transition your AI oversight from experimental setups to production-grade environments.

- Compare the industry-leading frameworks for open source ChatGPT monitoring to identify the best tools for tracing, debugging, and cost management.

- Master the technical implementation of OpenTelemetry and Python-based instrumentation to gain deep visibility into every OpenAI API interaction.

- Protect your organization by applying PII redaction strategies that prevent your monitoring stack from becoming a data security liability.

- Learn how to bridge the gap between technical logs and business intelligence by integrating AI usage data directly into your ERP systems.

Why Open Source ChatGPT Monitoring is Essential in 2026

By early 2026, 85% of enterprises have shifted from experimenting with AI to running mission-critical LLM applications. Simple logging isn’t enough anymore. You need deep observability to understand why a model failed or why costs spiked. Open source ChatGPT monitoring gives teams the visibility required to manage these complex systems without sacrificing control.

Data sovereignty is the primary catalyst for this shift. In 2025, a reported 14% of major corporate data leaks originated from third-party AI monitoring silos. By hosting your own monitoring stack, you ensure that proprietary prompts and customer data never leave your secure environment. Self-hosted monitoring also helps prevent the “bill shock” often caused by recursive agent loops. One unmonitored loop can rack up $4,200 in API fees in less than sixty minutes. Open source tools allow you to set hard spending limits at the infrastructure level that stop these runaway processes as soon as they cross a threshold.
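To make the idea of a hard spending limit concrete, here is a minimal sketch in Python. It works at the application level rather than the infrastructure level, and the names `SpendGuard`, `BudgetExceeded`, and `run_agent_loop` are hypothetical, invented for illustration. The loop is simulated with a fixed cost per step; a real agent would pass in the cost of each completed API call.

```python
class BudgetExceeded(RuntimeError):
    """Raised when cumulative spend crosses the configured ceiling."""


class SpendGuard:
    """Tracks cumulative API spend for one agent run and aborts past a limit."""

    def __init__(self, limit_usd: float) -> None:
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def record(self, cost_usd: float) -> None:
        # Add the cost of the call that just finished, then enforce the ceiling.
        self.spent_usd += cost_usd
        if self.spent_usd > self.limit_usd:
            raise BudgetExceeded(
                f"spent ${self.spent_usd:.2f}, limit is ${self.limit_usd:.2f}"
            )


def run_agent_loop(guard: SpendGuard, cost_per_step_usd: float, max_steps: int) -> int:
    """Simulated agent loop; returns how many steps ran before the guard fired."""
    steps = 0
    try:
        for _ in range(max_steps):
            # In real code this would be the measured cost of the LLM call just made.
            guard.record(cost_per_step_usd)
            steps += 1
    except BudgetExceeded:
        pass  # a real system would also alert and terminate the worker here
    return steps
```

With a $1.00 limit and $0.25 per step, the loop stops once the fifth call pushes spend over the ceiling, however large `max_steps` is.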

The Problem with Proprietary Monitoring Tools

Closed platforms typically charge per-seat fees that scale poorly. A mid-sized firm with 40 developers might pay $72,000 annually for a premium subscription. These tools often use “black box” scoring for output quality, making it impossible to audit their logic. Transitioning away is difficult because vendors frequently charge high egress fees to export your historical performance data, creating a permanent lock-in.

Key Metrics Every Business Should Track

Effective open source ChatGPT monitoring focuses on more than just uptime. Businesses must track specific performance indicators to maintain a positive ROI. Focus on these four pillars:

- Token consumption: Break down costs by department to identify which teams exceed their monthly $500 allocation.

- Latency: 2026 industry standards show that user satisfaction drops by 30% if response times exceed 1.5 seconds.

- Hallucination rates: Use automated checks to ensure factual accuracy stays above a 97% threshold.

- Security violations: Log every attempt at prompt injection or jailbreaking to refine your system prompts and guardrails.
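The four pillars above can be checked against fixed thresholds. The sketch below is one possible shape for that check, not a feature of any particular tool. The thresholds come from the list; the `DepartmentSnapshot` structure and `find_violations` function are hypothetical names used for illustration.

```python
from dataclasses import dataclass

# Thresholds taken from the list above; tune them for your own workloads.
MONTHLY_BUDGET_USD = 500.0
MAX_LATENCY_SECONDS = 1.5
MIN_FACTUAL_ACCURACY = 0.97


@dataclass
class DepartmentSnapshot:
    department: str
    monthly_cost_usd: float
    p95_latency_seconds: float
    factual_accuracy: float  # share of sampled answers that passed automated checks
    injection_attempts: int  # prompt-injection or jailbreak attempts logged


def find_violations(snapshot: DepartmentSnapshot) -> list[str]:
    """Return a human-readable list of the thresholds this snapshot breaks."""
    violations = []
    if snapshot.monthly_cost_usd > MONTHLY_BUDGET_USD:
        violations.append("token budget exceeded")
    if snapshot.p95_latency_seconds > MAX_LATENCY_SECONDS:
        violations.append("latency above target")
    if snapshot.factual_accuracy < MIN_FACTUAL_ACCURACY:
        violations.append("accuracy below target")
    if snapshot.injection_attempts > 0:
        violations.append("security events need review")
    return violations
```

A snapshot with $620 in spend and 95% accuracy would be flagged on the budget and accuracy pillars only.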

Top 5 Open Source Tools for LLM and ChatGPT Monitoring

The market for open source ChatGPT monitoring has matured significantly by 2026. Developers now prioritize data sovereignty and transparency over proprietary “black box” solutions. These five tools represent the current gold standard for maintaining LLM performance and security.

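- LangSmith (Self-Hosted): This remains the industry leader for granular tracing, letting teams visualize chain-of-thought reasoning in complex agentic workflows. Note that LangSmith itself is a proprietary product and self-hosting is offered under a commercial license, so teams that need fully open source code often pair it with, or replace it by, an open source alternative such as Langfuse. In a 2025 benchmark, teams using LangSmith reduced debugging time for nested LLM calls by 42%.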

- Arize Phoenix: This tool excels at high-dimensional data visualization. It’s the go-to for detecting model drift. If your ChatGPT-based application starts producing hallucinations at a rate higher than 5%, Phoenix helps identify the specific embedding clusters causing the issue.

- OpenTelemetry (OTel) with Elastic: For enterprise scale, the combination of OpenTelemetry and Elastic is hard to beat. It integrates LLM metrics directly into existing APM dashboards. This setup handles over 100,000 spans per second, which makes it ideal for high-volume deployments.

- Helicone: Helicone serves as a lightweight proxy. Integration usually means changing one line of code, the base URL, and adding an authentication header. It provides immediate visibility into token usage and costs. This is vital since API prices fluctuated by 15% in the last quarter of 2025.

- Promptfoo: Security is paramount. Promptfoo automates the testing of prompt injection risks. It runs 50 adversarial test cases against every new prompt iteration to ensure your chatbot doesn’t leak sensitive system instructions.

To keep your internal operations as efficient as your AI, track your business metrics alongside your LLM performance to ensure total visibility.

LangSmith vs. Arize Phoenix: Which is right for you?

LangSmith is built for the developer’s daily workflow. It focuses on the “why” behind a specific response. Arize Phoenix is better suited for data scientists working in Jupyter notebooks. LangSmith requires a more complex Docker setup, while Phoenix needs more memory, often 16 GB of RAM for large datasets, but offers superior vector analysis for open source ChatGPT monitoring.

Lightweight Alternatives for Startups

Startups often prefer Helicone’s proxy approach because it adds very little latency and almost no code. Alternatively, a simple Python wrapper can log token counts to a PostgreSQL database. While DIY monitors cost $0 in licensing, they lack the sophisticated visualization found in dedicated suites. Building a custom monitor also takes roughly 20 engineering hours, a cost that can outweigh the licensing savings over a pre-built tool.
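As a rough illustration of the DIY route, the sketch below logs token counts per request and sums them per user. It uses Python’s built-in `sqlite3` module as a stand-in for PostgreSQL so that it runs without a database server. The table name `llm_usage` and the function names are assumptions made for this example. In a real integration, the token counts would come from the `usage` field of the API response.

```python
import sqlite3
import time


def init_db(conn: sqlite3.Connection) -> None:
    """Create the usage table if it does not exist yet."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS llm_usage (
               ts REAL,
               user_id TEXT,
               model TEXT,
               prompt_tokens INTEGER,
               completion_tokens INTEGER
           )"""
    )


def log_usage(conn: sqlite3.Connection, user_id: str, model: str,
              prompt_tokens: int, completion_tokens: int) -> None:
    """Store one request's token counts; parameters are bound, never interpolated."""
    conn.execute(
        "INSERT INTO llm_usage VALUES (?, ?, ?, ?, ?)",
        (time.time(), user_id, model, prompt_tokens, completion_tokens),
    )
    conn.commit()


def tokens_by_user(conn: sqlite3.Connection) -> dict[str, int]:
    """Total tokens (prompt + completion) consumed by each user."""
    rows = conn.execute(
        "SELECT user_id, SUM(prompt_tokens + completion_tokens) "
        "FROM llm_usage GROUP BY user_id"
    )
    return dict(rows.fetchall())
```

Opening the connection with `sqlite3.connect(":memory:")` is enough to try it out. Moving to PostgreSQL would mainly mean swapping the driver and the placeholder syntax.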

Architecture Deep Dive: Implementing OpenTelemetry for AI

Building a robust framework for open source ChatGPT monitoring requires moving beyond simple log files to a structured telemetry pipeline. The architecture centers on an OpenTelemetry (OTel) collector that acts as a universal receiver for your AI traffic. By using Python libraries like opentelemetry-instrumentation-openai, developers can rely on monkey patching to intercept every request sent through the OpenAI SDK. This method automatically captures critical metadata, such as model versions and prompt lengths, without requiring you to rewrite your core application logic. It’s a non-invasive way to make every instrumented API interaction visible to your operations team.
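Monkey patching is easier to picture with a small example. The sketch below does not use the OpenAI SDK or any OpenTelemetry package. `FakeChatClient` is a hypothetical stand-in class, and `instrument` shows the general technique an instrumentation library might apply: swap a method for a wrapper that records metadata and then calls the original.

```python
import functools
import time

# Collected metadata; a real instrumentation library would emit spans instead.
captured_spans: list[dict] = []


class FakeChatClient:
    """Hypothetical stand-in for an SDK client; not the real OpenAI class."""

    def create(self, model: str, messages: list[dict]) -> dict:
        return {"model": model, "content": "ok"}


def instrument(cls: type, method_name: str) -> None:
    """Replace cls.method_name with a wrapper that records call metadata."""
    original = getattr(cls, method_name)

    @functools.wraps(original)
    def wrapper(self, *args, **kwargs):
        start = time.perf_counter()
        try:
            return original(self, *args, **kwargs)
        finally:
            # Only keyword arguments are inspected in this simplified sketch.
            messages = kwargs.get("messages", [])
            captured_spans.append({
                "model": kwargs.get("model"),
                "prompt_chars": sum(len(m.get("content", "")) for m in messages),
                "duration_s": time.perf_counter() - start,
            })

    setattr(cls, method_name, wrapper)


# Application code keeps calling FakeChatClient().create(...) unchanged.
instrument(FakeChatClient, "create")
```

The calling code does not change at all, which is why this approach is described as non-invasive.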

Once the data flows through the collector, exporters push these metrics into high-performance backends. You’ll likely use Prometheus for time-series metrics and Jaeger for tracing individual request lifecycles. For visualization, Grafana remains the gold standard. You can build custom dashboards that display real-time latency alongside token consumption. Setting up alerts is the final step in the stack. By configuring the Alertmanager, you can trigger Slack notifications or automated emails if a single project’s spend exceeds a 15% daily growth threshold or if the API error rate hits 2% over a rolling ten-minute window.
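The two alert conditions described above reduce to simple arithmetic. In a Prometheus and Alertmanager setup they would be written as alerting rules, so treat the plain-Python version below as an illustration of the logic only. The function and class names are hypothetical, and timestamps are assumed to arrive in increasing order.

```python
from collections import deque


def spend_growth_exceeded(yesterday_usd: float, today_usd: float,
                          max_growth: float = 0.15) -> bool:
    """True when day-over-day spend grew by more than max_growth (15% by default)."""
    if yesterday_usd <= 0:
        return today_usd > 0  # any spend after a zero-spend day counts as growth
    return (today_usd - yesterday_usd) / yesterday_usd > max_growth


class RollingErrorRate:
    """Error rate over a sliding time window (600 seconds = ten minutes)."""

    def __init__(self, window_seconds: float = 600.0) -> None:
        self.window_seconds = window_seconds
        self.events: deque[tuple[float, bool]] = deque()  # (timestamp, is_error)

    def _evict(self, now: float) -> None:
        # Drop events that have fallen out of the window.
        while self.events and self.events[0][0] <= now - self.window_seconds:
            self.events.popleft()

    def record(self, timestamp: float, is_error: bool) -> None:
        self.events.append((timestamp, is_error))
        self._evict(timestamp)

    def rate(self, now: float) -> float:
        self._evict(now)
        if not self.events:
            return 0.0
        return sum(1 for _, is_error in self.events if is_error) / len(self.events)
```

An alert would fire when `spend_growth_exceeded(...)` returns `True` or when `rate(now)` reaches `0.02`.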

Setting Up the OpenTelemetry Collector

OpenTelemetry is the industry-standard, vendor-neutral framework for observability, and its collector receives telemetry over the OpenTelemetry Protocol (OTLP). Have your instrumentation record OpenAI’s rate-limit response headers, x-ratelimit-remaining-requests and x-ratelimit-remaining-tokens, so the collector can forward them and you can spot throttling before it causes an outage. It’s vital to implement a redaction processor at this layer. By using regex patterns, the collector can scrub 10-digit phone numbers and email addresses from prompt strings before they reach your database. This architectural safeguard helps your open source ChatGPT monitoring setup comply with internal data privacy policies while still providing valuable usage insights.
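The regex logic behind such a redaction step can be prototyped in Python before it is ported to a collector processor. The patterns below are deliberately simple assumptions: they cover common email formats and 10-digit, US-style phone numbers, and they will miss other formats.

```python
import re

# Basic email shape: local part, "@", domain, dot, top-level domain.
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")

# Ten digits with optional separators, e.g. 415-555-0134 or (415) 555-0134.
# The lookarounds stop the pattern from matching inside longer digit runs.
PHONE_RE = re.compile(
    r"(?<!\d)(?:\(\d{3}\)\s?|\d{3}[-.\s]?)\d{3}[-.\s]?\d{4}(?!\d)"
)


def redact(text: str) -> str:
    """Replace emails and 10-digit phone numbers with fixed placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

Running the redaction before storage, rather than at display time, means the raw values never reach the database at all.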

Cost Calculation Logic

Accurate financial tracking in 2026 requires a logic engine that accounts for the split between input, output, and cached tokens. Your monitoring scripts should map usage to January 2026 pricing, where GPT-4o might cost $2.50 per million input tokens while the o1-preview model commands a higher $15.00 rate. The system should automatically aggregate these costs across different departments. By the 1st of every month, the stack can generate automated PDF reports for department heads, highlighting which specific internal tools are responsible for 80% of the monthly AI budget.
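The input, output, and cached-token split can be expressed as a small pricing function. In the sketch below, only the $2.50 and $15.00 input rates come from the text above. The cached and output rates are placeholders, and names such as `ModelPrice` and `cost_by_department` are hypothetical. Replace the table with your provider’s current price list before relying on the numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """USD per one million tokens."""
    input_per_million: float
    cached_input_per_million: float
    output_per_million: float


# Input rates are from the article; cached and output rates are placeholders.
PRICES = {
    "gpt-4o": ModelPrice(2.50, 1.25, 10.00),
    "o1-preview": ModelPrice(15.00, 7.50, 60.00),
}


def request_cost(model: str, input_tokens: int, cached_tokens: int,
                 output_tokens: int) -> float:
    """Cost of one request; cached_tokens is the cached portion of input_tokens."""
    price = PRICES[model]
    uncached_tokens = input_tokens - cached_tokens
    total = (
        uncached_tokens * price.input_per_million
        + cached_tokens * price.cached_input_per_million
        + output_tokens * price.output_per_million
    )
    return total / 1_000_000


def cost_by_department(records: list[tuple[str, str, int, int, int]]) -> dict[str, float]:
    """Aggregate (department, model, input, cached, output) records into USD totals."""
    totals: dict[str, float] = {}
    for department, model, input_tokens, cached_tokens, output_tokens in records:
        cost = request_cost(model, input_tokens, cached_tokens, output_tokens)
        totals[department] = totals.get(department, 0.0) + cost
    return totals
```

Keeping prices in one table makes a pricing change a one-line update, and the monthly report becomes a straightforward aggregation over logged requests.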

Security & Privacy: Monitoring Usage Without Leaking Data

Implementing open source ChatGPT monitoring creates what security experts call the Monitoring Paradox. You need visibility to ensure safety, but the monitoring tool itself can become a central repository of sensitive corporate data. If your monitoring dashboard logs every prompt in plain text, a single compromised admin account exposes your entire intellectual property. To mitigate this risk, 68% of enterprise security teams now prioritize local-first log processing where data never leaves the internal network.

Compliance is no longer a suggestion. The EU AI Act entered into force in August 2024, and most of its obligations, including logging and transparency duties for high-risk systems, are scheduled to apply from August 2026. GDPR Article 25 requires data protection by design and by default. In practice, this means your monitoring stack should support Role-Based Access Control (RBAC). Only specific compliance officers should see raw logs; managers should only see aggregated, anonymized trends. This structure limits internal data leaks while preserving the audit trails that regulators expect.
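The split between raw logs for compliance officers and aggregated trends for everyone else can be sketched as a single access function. The role name `compliance_officer`, the log fields, and the `view_logs` function are assumptions for this example. A production system would enforce the same rule in the database or the dashboard’s permission layer.

```python
# Roles allowed to read raw prompt logs; everyone else gets aggregates only.
RAW_LOG_ROLES = {"compliance_officer"}


def view_logs(role: str, logs: list[dict]) -> list[dict]:
    """Return raw entries for privileged roles, per-department counts otherwise."""
    if role in RAW_LOG_ROLES:
        return logs

    # Aggregated view: no prompt text and no user identifiers leave this function.
    counts: dict[str, int] = {}
    for entry in logs:
        counts[entry["department"]] = counts.get(entry["department"], 0) + 1
    return [
        {"department": department, "requests": count}
        for department, count in sorted(counts.items())
    ]
```

Because the aggregation is built from scratch rather than by deleting fields, a newly added sensitive field cannot leak into the manager view by accident.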

Redacting Sensitive Information

Effective redaction uses a multi-layer approach. First, regex patterns catch low-hanging fruit like 16-digit credit card numbers or standard password strings. Second, NLP libraries like Microsoft Presidio identify entities like names or addresses that regex might miss. Third, hashing user IDs, ideally with a secret key so the hashes cannot be reversed by guessing common values, allows you to track a single user’s behavior over a 90-day period without ever storing their actual name or email in the database. This keeps open source ChatGPT monitoring private by default.
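For the third layer, a keyed hash is a common way to pseudonymize user IDs. The sketch below uses HMAC-SHA256 from Python’s standard library. The function name `pseudonymize` and the normalization step are assumptions, and the secret key should be stored outside the monitoring database.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Map a user ID to a stable pseudonym that cannot be reversed without the key."""
    # Normalize so "Alice@Example.com " and "alice@example.com" map to the same value.
    normalized = user_id.strip().lower().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()
```

The same user always maps to the same 64-character value, so 90-day behavior analysis still works. Rotating the key breaks the link between old and new pseudonyms, which can be useful when a retention period ends.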

Preventing Shadow AI

Industry reports from 2025 show that 40% of employees use unauthorized AI tools because corporate alternatives feel too restrictive. Monitoring helps you identify these leaks by tracking API calls and traffic patterns. Instead of banning tools, smart companies use monitored gateways to create a “Safe AI” portal. This provides the speed employees want with the oversight the legal team requires. You can audit your AI usage patterns to ensure your team stays productive without compromising the company’s security posture.

Beyond Logs: Integrating AI Tracking into Business Workflows

Monitoring isn’t just a technical checkbox for IT departments anymore. By mid-2026, a projected 62% of mid-sized firms will have shifted their focus from basic observability to deep business intelligence. Tracking “mentions” represents the next frontier of this evolution. You need to know how ChatGPT represents your brand to millions of users. If an AI recommends a competitor’s fabric for a specific garment design, your marketing strategy must pivot immediately. This level of open source ChatGPT monitoring bridges the gap between raw data and market share.

Connecting these insights to your core ERP and production systems ensures that AI usage isn’t a siloed expense. When you link LLM queries to your production cycles, you gain a 22% increase in resource allocation efficiency. It’s about seeing the direct line between a prompt and a finished product sitting on the warehouse floor. Strategic brand protection now depends on your ability to audit these digital conversations as they happen.

ChatGPT Mention Tracking with TrackMyBusiness

TrackMyBusiness helps you identify exactly where your brand appears in AI-generated outputs. This visibility allows you to correct hallucinations or capitalize on positive endorsements in real time. The platform integrates AI cost data directly into your production management software. You won’t just see a bill for tokens; you’ll see the specific cost per garment design. This shift turns technical logs into actionable insights that drive a 15% reduction in R&D overhead by Q3 2026.

Next Steps for Implementation

Start by auditing your monthly API volume. If your team processes over 50,000 requests monthly, a robust open source ChatGPT monitoring stack is essential. You’ll need to decide between a fully self-hosted stack for total data sovereignty or a managed open-source solution for faster deployment. Most firms with fewer than 200 employees find that managed solutions save 40 hours of monthly maintenance time.

- Evaluate your current token usage and latency requirements to set a baseline.

- Determine if you need on-premise storage for sensitive product designs and proprietary workflows.

- Optimize your business workflows with TrackMyBusiness Tracker to unify your AI data with physical production.

The future of LLM tracking moves from simple logs to strategic brand protection. By the end of 2026, the companies winning the market will be those that treat AI data as a core asset rather than just a line item in the budget.

Secure Your AI Advantage with Transparent Oversight

The landscape of 2026 demands absolute visibility into every LLM interaction. Implementing open source ChatGPT monitoring gives your team the data sovereignty required to prevent leaks while optimizing API costs. By adopting OpenTelemetry frameworks, you’re building a stack that scales across 50+ diverse business workflows without restrictive vendor lock-in. Security isn’t just a minor feature; it’s a foundation that 92% of global CTOs now prioritize over raw model performance. You can’t afford to leave your data exposed when open-source alternatives provide this level of granular control.

If you’re ready to move beyond basic logs, you need a system built for operational clarity. TrackMyBusiness offers specialized LLM mention tracking and modular “Tracker” software designed for end-to-end transparency. It’s the reason 15+ garment and decoration industry leaders trust us to manage their sensitive data pipelines and production schedules. Our platform ensures your AI integrations deliver measurable ROI instead of hidden liabilities. Don’t let your AI remain a black box when you can turn it into a measurable asset today.

Start Tracking Your Business Intelligence with TrackMyBusiness

Your journey toward a more efficient, data-driven enterprise starts with the right tools in place.

Frequently Asked Questions

Is there a truly free ChatGPT monitoring tool for small businesses?

Yes. Open source tools like Helicone offer free hosted tiers, as does the proprietary LangSmith, for up to 10,000 requests every month; check each vendor’s current limits before you commit. Small businesses often save $200 monthly by opting for a self-hosted deployment of Arize Phoenix. These tools give you full control over your data without the high price tag of enterprise software. You’ll find that 90% of basic monitoring needs are met by these community-driven versions.

Can I monitor ChatGPT usage without changing my application code?

You can monitor ChatGPT usage with almost no code changes by implementing a proxy-based solution like LiteLLM. Your application only needs to point its API base URL at the proxy, which routes your API calls through a central hub that logs every interaction automatically. It cuts down your integration time from 4 days to roughly 20 minutes. Most developers prefer this because it doesn’t clutter the main application logic with extra monitoring calls. It’s an efficient way to gain visibility quickly.

Does monitoring the OpenAI API increase latency for the end user?

Asynchronous monitoring adds less than 5 milliseconds of latency to your average response time. Tools like OpenTelemetry process data in the background so your users won’t feel any lag. If you use a synchronous proxy, you might see a 12% increase in delay, so it’s best to stick with background exporters for your production apps. This keeps your interface snappy and responsive while still capturing every important data point.
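The difference between background and synchronous export is easiest to see in code. The sketch below is a simplified illustration and not OpenTelemetry’s actual exporter. `BackgroundExporter` is a hypothetical class that queues records on the request path and ships them from a worker thread, so the request handler only pays for an in-memory enqueue.

```python
import queue
import threading


class BackgroundExporter:
    """Queues telemetry records and delivers them from a worker thread."""

    def __init__(self, sink, max_queue: int = 1000) -> None:
        self._queue: queue.Queue = queue.Queue(maxsize=max_queue)
        self._sink = sink  # callable that delivers one record, e.g. an HTTP post
        self.dropped = 0
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def submit(self, record: dict) -> None:
        """Called on the request path; never blocks the caller."""
        try:
            self._queue.put_nowait(record)
        except queue.Full:
            self.dropped += 1  # shed load instead of slowing down user requests

    def _run(self) -> None:
        while True:
            record = self._queue.get()
            try:
                if record is None:  # sentinel: stop the worker
                    return
                self._sink(record)
            finally:
                self._queue.task_done()

    def shutdown(self) -> None:
        """Flush everything queued so far, then stop the worker."""
        self._queue.put(None)
        self._worker.join()
```

Dropping records when the queue is full is a deliberate trade-off in this sketch: it favors user-facing latency over complete telemetry, and the `dropped` counter shows how often that happened.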

What is the difference between LLM observability and traditional logging?

LLM observability focuses on semantic content and token usage, while traditional logging mostly records system events and errors such as 404s. Open source ChatGPT monitoring frameworks allow you to visualize traces and identify where a specific prompt failed in a chain. You’ll get data on the 95th percentile of costs, which standard logs can’t easily provide. It’s a much more granular way to manage AI performance and understand user intent during every session.

How do I track costs if my team uses multiple different AI models?

Unified dashboards like Langfuse track costs across GPT-4o, Claude 3, and Llama 3 by applying per-model token rates to every logged request. The resulting report is only as accurate as the price table behind it, so confirm that it reflects your providers’ current rates rather than an outdated list. You can see if 65% of your budget is being spent on a single department. This helps you optimize your AI spend accurately and prevents unexpected billing surprises at month-end.

Is it legal to monitor employee prompts in a corporate environment?

Monitoring employee prompts is legal in many jurisdictions, provided you disclose it clearly, for example in your company’s acceptable use policy. A recent study showed that 58% of global enterprises now track AI interactions to prevent data leaks. You must inform your staff that their inputs are being recorded to stay compliant with privacy laws, and the exact requirements vary by country. It’s a standard security practice for protecting corporate secrets and ensuring that AI is used responsibly.

Can open-source tools detect if ChatGPT is hallucinating?

Open-source frameworks like Giskard or DeepEval detect hallucinations with roughly 88% accuracy using automated testing suites. They compare the AI’s response against your internal knowledge base to find factual errors. By running these 15 distinct metric checks, you ensure your chatbot doesn’t provide false information to users. It’s a vital step for maintaining trust in your AI systems throughout 2026. Automated detection saves hours of manual quality assurance work.

How often should I audit my AI monitoring logs for security risks?

You should audit your AI monitoring logs at least once every 30 days to catch long-term security trends. While automated tools catch 92% of immediate prompt injections, manual reviews help you spot subtle patterns of data misuse. A monthly schedule also produces the kind of review evidence that SOC 2 auditors typically ask for, although SOC 2 itself does not prescribe a specific cadence. Don’t wait longer than 90 days to review your logs or you’ll risk missing critical vulnerabilities that could lead to a data breach.